The AI assistant market has gotten crowded. Really crowded.

Three heavyweights dominate conversations right now: Anthropic’s Claude, OpenAI’s GPT series (ChatGPT), and the dark horse DeepSeek. But here’s the thing—asking which one is “best” is like asking whether a hammer, screwdriver, or wrench is the superior tool. It depends entirely on what you’re building.

I’ve spent months testing all three for different use cases. Some days I’m team Claude. Other days I can’t live without ChatGPT’s ecosystem. And occasionally, DeepSeek surprises me with responses that punch way above its weight class.

Comparative research across scientific computing, coding tasks, and general reasoning challenges suggests these models show distinct performance patterns. The differences aren’t trivial—they can mean the difference between a project that flows smoothly and one where you’re constantly fighting your AI assistant.

Let’s break down what actually matters.

Model Evolution: Where Each AI Stands Today

Understanding the current landscape requires knowing what version you’re actually dealing with. The naming conventions alone are confusing enough to make anyone’s head spin.

- OpenAI’s GPT lineup has evolved dramatically. The GPT-5 series is now consolidated under the ‘GPT-5.2’ and ‘GPT-5-mini’ names. The legacy o1 and o3 models have been folded into the GPT-5 reasoning core and, as of early 2026, are no longer offered as standalone options in the ChatGPT interface.

- Anthropic’s Claude centers around Claude Sonnet 4.5 as the workhorse model. It has earned particular praise for coding tasks, with multiple users reporting they use Claude “90% of the time” for development work.

- DeepSeek has released versions including DeepSeek V3 and the reasoning-focused DeepSeek R1. The R1 model specifically attempts to compete with OpenAI’s reasoning models by showing its “thinking” process—those long chains of reasoning that sometimes feel excessive but occasionally catch errors other models miss.

Reasoning and Intelligence: Who Thinks Better?

Here’s where things get interesting. And contentious.

Comparative research on scientific computing tasks reveals nuanced differences in how these models approach problem-solving. For mathematical reasoning and physics problems, performance varies significantly based on problem complexity and domain specificity.

Community discussions on Reddit show mixed real-world experiences. One user testing multiple models reported using different tools for different purposes, noting that consistency varies by use case and model selection.

DeepSeek in particular keeps getting described as “hit or miss.” The model can deliver surprisingly sophisticated reasoning on complex problems, then stumble on simpler queries. It’s inconsistent in ways that make it risky for mission-critical work but intriguing for experimentation.

ChatGPT’s reasoning models (the o1 and o3 lineage, now folded into GPT-5) excel at structured problem-solving. They’re methodical, thorough, and rarely hallucinate wild answers. But they’re also slower and more expensive to run.

Claude takes a different approach—less explicit reasoning chains, more fluid responses. For many creative and analytical tasks, this feels more natural. Users report varied experiences with different models depending on the specific use case and task requirements.

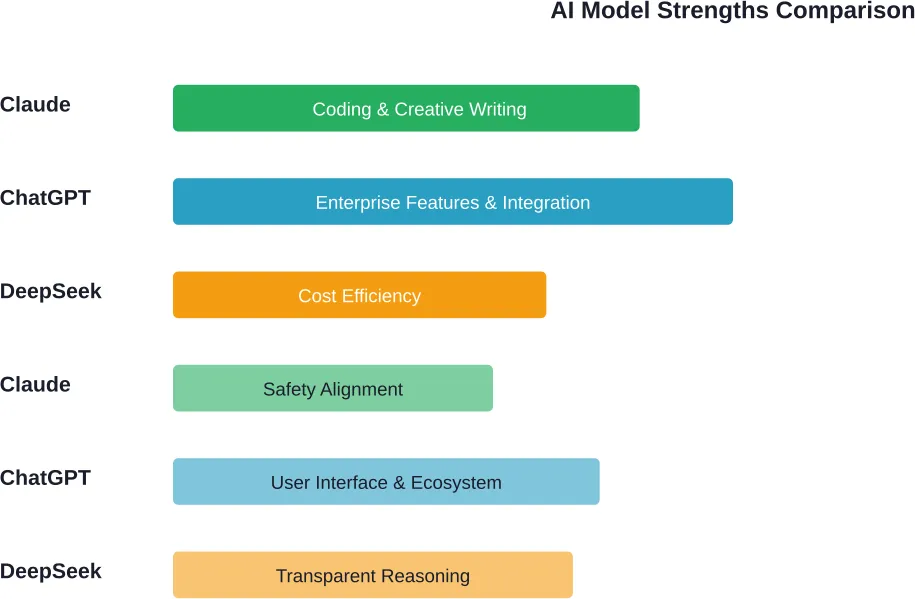

Primary and secondary strengths of each AI model based on benchmark testing and user feedback

Coding Ability: The Developer’s Perspective

Real talk: this is where the rubber meets the road for many professionals.

Multiple developers and numerous Reddit threads point to Claude as a leading choice for coding tasks. “For software, Claude is better by a mile,” one engineer wrote. “I use them both every day.”

Another developer running side-by-side tests said Claude handles most of their coding, with occasional switches to ChatGPT or DeepSeek when Claude starts going in circles. That circular pattern is a known Claude limitation: when it misunderstands requirements, it sometimes digs deeper into the wrong solution rather than stepping back.

Comparative research on scientific computing tasks identifies performance differences in code generation quality, debugging capabilities, and API design sensibility.

DeepSeek’s coding performance gets mixed reviews. One interesting observation from a developer: “DeepSeek tends to generate simpler, more straightforward code compared to Claude, which often leans towards more complex implementations.”

Is simpler better? Sometimes. If you’re prototyping or building something that doesn’t need to scale, DeepSeek’s straightforward approach saves time. But as another user pointed out, Claude’s more complex solutions often anticipate future scaling needs.

ChatGPT sits somewhere in the middle. It’s competent at coding but doesn’t inspire the same fierce loyalty Claude does among developers. Where ChatGPT shines is integration—GitHub Copilot, VS Code extensions, and enterprise development environments often default to GPT-based models because of OpenAI’s ecosystem dominance.

The Overengineering Problem

Here’s something nobody talks about enough: Claude sometimes overengineers solutions.

One developer expressed frustration that when asked for a simple login form, Claude provided a production-ready, enterprise-scale implementation with error handling, logging, security measures, and extensibility that wasn’t requested. Multiple users report similar experiences—ask Claude for a basic feature, get back a comprehensive solution.

Is this bad? Depends on your timeline and needs. For learning or building robust systems, it’s actually great. For quick prototypes or MVPs, it’s frustrating overhead.

Pricing: The Reality Check

Cost matters. Especially if you’re using these tools daily.

| Model | Free Tier | Paid Tier | Key Limitations |
|---|---|---|---|
| ChatGPT | GPT-5.2 access | $20/month (Plus), $200/month (Pro) | Caps on advanced-reasoning messages for Plus users |
| Claude | Limited Claude Sonnet access | $20/month (Pro) | Usage limits reset periodically; message caps apply |
| DeepSeek | Free with rate limits | API pricing (significantly cheaper) | Service reliability issues; periodic downtime |

DeepSeek’s pricing advantage is massive. Users note that the service offers significantly lower costs compared to premium competitors.

However, here’s what the price comparison misses: availability. DeepSeek users consistently report that the service experiences periodic downtime and availability challenges. Free is only valuable if you can actually access the service when you need it.

Claude’s pricing feels reasonable at $20/month, but the usage limitations frustrate many users. Those limits reset periodically, but if you’re in a flow state working on a complex problem, hitting a wall mid-conversation kills productivity.

ChatGPT’s two-tier approach ($20 for Plus, $200 for Pro) creates an awkward middle ground. The caps on advanced-reasoning messages for Plus subscribers feel restrictive. One user sharing a family subscription complained that the message limit affects everyone in their household.

User Experience and Interface Design

This rarely shows up in benchmark comparisons, but it matters enormously in daily use.

ChatGPT offers the most polished, feature-rich interface. Custom instructions, GPTs (custom assistants), image generation, web browsing, file uploads, Canvas for collaborative editing: it’s a full ecosystem. Users often praise ChatGPT’s interface for its breadth of features.

Claude’s interface is cleaner but more minimal. It feels focused, which some users love and others find limiting. No native image generation. No elaborate custom GPT marketplace. Just conversation and artifacts (Claude’s collaborative workspace feature).

DeepSeek’s interface is functional. It gets the job done but lacks polish. For users accessing it through API rather than the web interface, this matters less.

Real-World Use Cases: What Works Where

Let’s get practical. What should you actually use for different tasks?

For Software Development

Claude Sonnet 4.5 is frequently chosen for development work. User feedback suggests using Claude as the primary tool, with ChatGPT or DeepSeek as fallbacks when needed.

One developer’s workflow involves: starting with Claude, switching to GPT when needed, trying DeepSeek for comparison, and cycling back to Claude.

For Creative Writing

This one’s more subjective. Writers report different preferences for how each model handles creative content, and no single model comes out ahead for everyone.

ChatGPT sits comfortably in the middle. It’s reliable and versatile but rarely anyone’s passionate favorite for creative work.

For Enterprise and Integration

ChatGPT wins by default. The ecosystem integrations, Microsoft partnerships, enterprise admin controls, and API stability make it the safe corporate choice. Claude is catching up with enterprise offerings, but OpenAI’s head start matters here.

For Cost-Conscious Experimentation

DeepSeek, obviously—if you can tolerate the availability issues and inconsistency. It’s legitimately impressive what they’ve achieved at their price point.

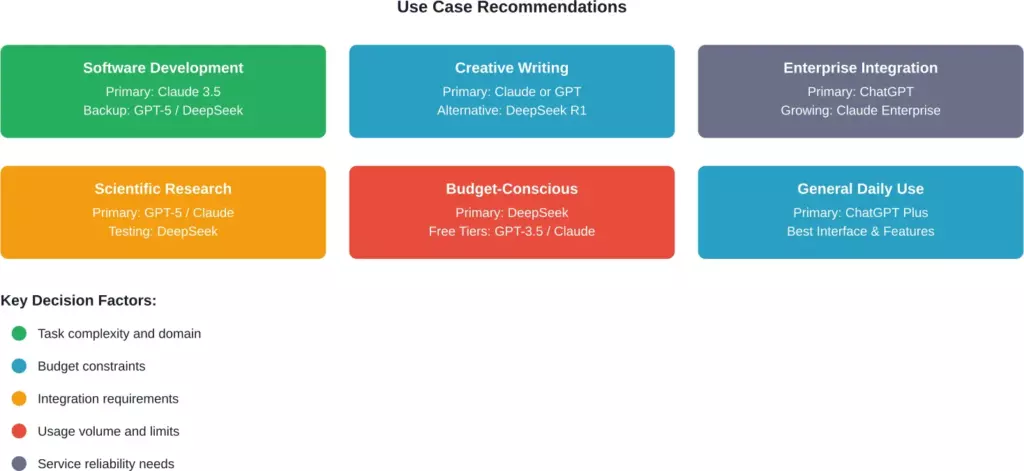

Strategic use case mapping based on model strengths and real-world user feedback

Training Data, Transparency, and Safety

This matters more than most people realize.

OpenAI has faced ongoing criticism about training data transparency. It has published a Model Spec outlining how its models should behave and has updated it over time, but concrete details about training data remain limited.

Anthropic positions Claude as the safety-conscious choice. Their Constitutional AI approach attempts to build helpful, harmless, and honest behaviors into the model at a fundamental level. Whether this translates to meaningfully safer outputs in practice is debatable, but Claude does refuse harmful requests more consistently than some competitors.

DeepSeek’s origins as a Chinese AI lab have sparked both interest and concern. For some users, this is irrelevant. For others, especially in government, defense, or sensitive commercial contexts, it may raise data-governance concerns regardless of technical performance.

Known Limitations and Weaknesses

Every model has blind spots. Let’s be honest about them.

Claude Limitations

- Can get stuck in circular reasoning patterns when it misunderstands requirements

- Restrictive usage limits even on paid tiers

- Can overcomplicate simple requests

- Limited ecosystem integrations compared to ChatGPT

ChatGPT Limitations

- Doesn’t inspire the same loyalty among developers that Claude does for coding

- Expensive Pro tier ($200/month) for unlimited advanced reasoning

- Caps on advanced-reasoning messages frustrate Plus subscribers

- Interface complexity can overwhelm new users

DeepSeek Limitations

- Availability issues and periodic downtime

- Inconsistent performance—strong on some tasks, weaker on others

- Weaker image-related capabilities

- Less polished interface and fewer features

- Concerns about data governance for sensitive use cases

What the Benchmarks Actually Tell Us

Benchmark comparisons flood the internet. Most are nearly useless for practical decision-making.

Research comparing DeepSeek with other major LLMs reveals that performance varies significantly depending on task specificity. An LLM that excels at mathematical reasoning might struggle with nuanced language tasks. One that generates brilliant code might produce awkward creative writing.

The LMSYS Chatbot Arena (since rebranded as LMArena) provides crowdsourced rankings based on user votes. DeepSeek-R1 appeared on its leaderboard with competitive scores, though leaderboard position doesn’t tell you whether a model will work for your specific needs.

What matters more than aggregate scores? Testing the models on tasks similar to your actual use cases. Spend a week using each one for your real work. That’ll teach you more than any benchmark.
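If you want that week of testing to produce more than a gut feeling, a hand-kept log of which model gave the most useful answer on each real task is enough. The sketch below is a hypothetical helper, not a feature of any of these products; the category names and the labels `model-a` and `model-b` are placeholders you would replace with your own.

```python
from collections import Counter, defaultdict


def tally_wins(log):
    """Count, per task category, how often each model gave the best answer.

    `log` is a list of (category, winning_model) pairs recorded by hand
    after each real task. Both fields are free-form labels you choose.
    """
    wins = defaultdict(Counter)
    for category, model in log:
        wins[category][model] += 1
    return wins


def preferred_model(wins):
    """Return the model with the most wins in each category.

    Counter.most_common keeps first-seen order for equal counts,
    so a tie goes to the model that won that category first.
    """
    return {category: counts.most_common(1)[0][0] for category, counts in wins.items()}


# Placeholder entries; swap in your own categories and model names.
log = [
    ("coding", "model-a"),
    ("coding", "model-b"),
    ("coding", "model-a"),
    ("writing", "model-b"),
]
print(preferred_model(tally_wins(log)))
# {'coding': 'model-a', 'writing': 'model-b'}
```

Tracking wins per category rather than as one overall total matters here: the whole point is that the best model changes with the task.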

The Community Verdict

Real users don’t pick one model and stick with it religiously. They mix and match.

A common pattern from Reddit discussions: use Claude as the primary tool for coding, keep ChatGPT for its ecosystem features and interface, try DeepSeek when you’re curious or testing something low-stakes, and switch between them when one gets stuck.

The most revealing comments reflect uncertainty: some users believe the market hasn’t settled, with each model having distinct advocates and legitimate strengths.

Making Your Decision

So what should you actually do?

Start by honestly assessing your primary use case. Are you coding daily? Claude deserves serious consideration. Need enterprise features and integrations? ChatGPT is the safe bet. Working with budget constraints and willing to tolerate inconsistency? DeepSeek might surprise you.

Consider trying multiple services simultaneously. Many professionals maintain subscriptions to both ChatGPT Plus and Claude Pro ($40/month total) and use them for different tasks. That’s cheaper than ChatGPT Pro alone and gives you flexibility.
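The arithmetic behind that comparison is simple, but it is worth seeing annualized. This minimal sketch uses only the list prices quoted in this article; prices change, and taxes, regional pricing, and annual-plan discounts are ignored.

```python
# Monthly list prices quoted in this article, in US dollars.
# Assumption: these will drift over time; check each vendor's pricing page.
PRICES = {
    "ChatGPT Plus": 20,
    "ChatGPT Pro": 200,
    "Claude Pro": 20,
}


def monthly_cost(plans):
    """Sum the monthly list price of a set of subscriptions."""
    return sum(PRICES[plan] for plan in plans)


combo = monthly_cost(["ChatGPT Plus", "Claude Pro"])
pro_only = monthly_cost(["ChatGPT Pro"])

print(f"Plus + Claude Pro: ${combo}/month, ${combo * 12}/year")
print(f"ChatGPT Pro alone: ${pro_only}/month, ${pro_only * 12}/year")
print(f"Difference: ${pro_only - combo}/month")
# Plus + Claude Pro: $40/month, $480/year
# ChatGPT Pro alone: $200/month, $2400/year
# Difference: $160/month
```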

Don’t overthink it. These tools evolve rapidly. The model that’s slightly behind today might leapfrog ahead next quarter. Stay flexible, test regularly, and switch when something better emerges.

| Your Priority | Best Choice | Runner-Up |
|---|---|---|
| Professional software development | Claude Sonnet 4.5 | ChatGPT |
| Enterprise deployment | ChatGPT | Claude Enterprise |
| Cost efficiency | DeepSeek | Free tiers of GPT/Claude |
| Overall versatility | ChatGPT Plus | Claude Pro |
| Creative writing assistance | Claude or ChatGPT | DeepSeek R1 |
| Safety and content filtering | Claude | ChatGPT |

Looking Ahead: What’s Next?

The pace of change in this space is absurd. GPT-5 has launched. Claude updates regularly. DeepSeek keeps releasing new versions that demonstrate competitive capabilities.

Generally speaking, the market is moving toward specialization rather than one model dominating everything. Anthropic focuses on safety and coding. OpenAI chases enterprise dominance and ecosystem lock-in. DeepSeek demonstrates that you don’t need infinite resources to compete.

The real winner might be users. Competition drives improvement and keeps prices within reasonable ranges. Five years ago, this level of AI capability would’ve seemed like science fiction. Today we’re evaluating which $20/month subscription offers better code generation.

That’s pretty remarkable when you think about it.

Final Thoughts

There’s no universal winner in the Claude vs GPT vs DeepSeek debate. Each model brings distinct strengths that matter differently depending on your specific needs, budget, and use cases.

Claude dominates coding tasks and earns loyalty from developers for good reason. ChatGPT offers the most complete ecosystem with enterprise features that matter for organizational deployment. DeepSeek delivers value for money if you can tolerate inconsistency and availability issues.

My honest recommendation? Stop searching for the “best” AI model. Start testing all three on your actual work. Spend a week with each. Pay attention to which one you naturally reach for when facing different challenges. That personal experience will guide you better than any benchmark or comparison article.

The AI landscape in 2026 is competitive and rapidly evolving. That’s good news for everyone except the companies trying to maintain dominant positions. For users, it means better tools, more choices, and continuing innovation.

Pick the model that works for you today. Stay open to switching tomorrow. And don’t get too attached—something better is probably launching next month.

Ready to test these models yourself? Start with free tiers of ChatGPT and Claude to get a feel for their interfaces and capabilities. If you’re serious about coding, consider trying Claude Pro. If enterprise features matter, explore ChatGPT’s team and enterprise offerings. And throw DeepSeek into the mix for experimentation. Your own experience will teach you more than anyone else’s opinion.

Frequently Asked Questions

Which AI model is best for coding?

Based on extensive developer feedback and comparative testing, Claude Sonnet 4.5 performs well for coding tasks. Developers frequently report using Claude for their development work, switching to ChatGPT mainly when they need specific integrations or ecosystem features. Claude excels at understanding complex codebases, generating clean implementations, and debugging. However, ChatGPT’s ecosystem advantages (GitHub Copilot, VS Code extensions) make it valuable inside integrated development environments.

Why is DeepSeek so much cheaper?

DeepSeek’s lower costs stem from several factors: aggressive optimization of its model architecture, different business priorities, and a different cost structure for compute resources. However, “cheaper” comes with tradeoffs: users report availability issues, inconsistent performance, and less polished interfaces. The cost advantage is real but doesn’t tell the whole story.

Which AI assistant is best for enterprise use?

ChatGPT currently dominates enterprise deployments due to OpenAI’s extensive integrations with Microsoft products, established enterprise admin controls, and robust API stability. Claude Enterprise is emerging as an alternative, particularly for organizations prioritizing safety features and code-related workflows. DeepSeek faces adoption barriers in enterprise contexts due to reliability concerns. For most large organizations, ChatGPT remains the proven choice.

Should I use more than one AI assistant?

Using multiple AI assistants is increasingly common and often optimal. Many professionals maintain both ChatGPT Plus and Claude Pro subscriptions ($40/month total), using each for their respective strengths. Claude handles coding and analytical writing, ChatGPT provides ecosystem features and general versatility, and DeepSeek serves as an alternative for experimentation. The cost of multiple subscriptions is often justified by productivity gains and having alternatives when one model struggles with specific tasks. Don’t feel locked into a single choice.

How do these services handle privacy and data security?

Users should review each service’s terms regarding data handling. ChatGPT offers an enterprise tier with stronger data protections. Claude emphasizes privacy and safety. DeepSeek’s data handling may raise concerns for some organizations. For truly sensitive work, use enterprise tiers with explicit data protection agreements, run models locally if possible, or avoid entering confidential information into cloud-based services.

What’s the difference between free and paid tiers?

Free tiers typically offer limited access, sometimes to older or smaller models, with slower response times and restrictive usage caps. Paid tiers ($20/month) generally provide access to newer, more capable models with priority processing and higher usage limits. ChatGPT Plus includes GPT-5.2 access with capped advanced-reasoning usage. Claude Pro offers Claude Sonnet 4.5 with usage limits. For professionals using these tools daily, paid tiers often justify their cost through time savings and better output quality.

Which model is best at complex reasoning?

Reasoning capabilities vary by problem domain. OpenAI’s reasoning models (the o1 and o3 line, now folded into GPT-5) are designed to excel at structured, mathematical reasoning. DeepSeek R1 shows competitive reasoning capabilities but less consistently. Claude approaches reasoning differently, with less explicit chain-of-thought but often more natural integration into its answers. Performance varies across benchmarks and domains, so the best choice depends on your particular needs.