Quick Summary: AI talking photo ads transform static images into speaking video advertisements using facial animation, lip-sync, and voice generation. The market offers diverse solutions ranging from free tools to enterprise platforms, each tailored for different use cases from social media ads to brand campaigns. Choosing the right platform depends on realism needs, budget, integration requirements, and production volume.

Static photos in advertising don’t grab attention the way they used to. Scroll speed is brutal, and standing out requires movement, sound, and personality. That’s where talking photo AI steps in.

These platforms take a single portrait and animate it into a short video where the person speaks, blinks, and moves naturally. The technology behind this combines facial landmark detection, motion synthesis, voice cloning, and lip-sync algorithms—all powered by machine learning models trained on thousands of hours of video data.

The AI video market reflects this shift. According to industry projections, the sector is expected to reach $27.77 billion by 2030, growing at a 26.4% CAGR from 2023 to 2030. That growth is driven largely by demand for scalable video content, multilingual campaigns, and cost reduction in traditional production workflows.
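As a quick sanity check on the projection above, the compound growth formula can be inverted to see what starting market size those two figures imply. This is a minimal sketch: it assumes seven full compounding years between 2023 and 2030, and the resulting base is a derived estimate rather than a reported number.

```python
def implied_base(future_value: float, cagr: float, years: int) -> float:
    """Invert FV = PV * (1 + r) ** n to recover the starting value PV."""
    return future_value / (1 + cagr) ** years


def project(base: float, cagr: float, years: int) -> float:
    """Compound a starting value forward at a constant annual growth rate."""
    return base * (1 + cagr) ** years


TARGET_2030_BN = 27.77  # projected market size quoted above, in $ billions
CAGR = 0.264            # 26.4% compound annual growth rate quoted above
YEARS = 2030 - 2023     # assumption: seven full compounding periods

base_2023 = implied_base(TARGET_2030_BN, CAGR, YEARS)
print(f"Implied 2023 market size: ${base_2023:.2f}B")

# Year-by-year path implied by a constant growth rate
for year in range(2023, 2031):
    print(year, f"${project(base_2023, CAGR, year - 2023):.2f}B")
```

Under these assumptions the implied 2023 base comes out at roughly $5.4 billion, which is a useful reference point when comparing this projection against other market estimates.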

But here’s the thing: not all talking photo tools are built the same. Some excel at realism but cost hundreds per month. Others offer free tiers but produce obvious “AI” motion. Some integrate performance analytics; others just export video files.

This list breaks down the most prominent AI solutions for creating talking photo ads in 2026, covering what they do, who they’re for, and what trade-offs you’ll face.

Predict Ad Performance Before Launch

Extuitive provides an AI-native platform that automates the creation and testing of digital advertisements. For businesses utilizing talking photo ads, this technology offers a way to predict audience engagement and performance before the media goes live. By using autonomous agents to simulate consumer behavior, it identifies which visual concepts and scripts will resonate most effectively with target segments, reducing the financial risk associated with unverified creative assets.

The platform includes the following features:

- Predictive modeling to forecast the effectiveness of talking photo assets

- Autonomous generation of ad variations based on product URLs

- Pre-launch testing using AI-powered consumer personas

- Automated audience discovery to match creatives with high-intent buyers

- Direct integration with e-commerce systems for rapid data synchronization

To start optimizing your creative strategy with predictive insights, you can purchase a subscription or schedule a demo at Extuitive.

What Makes a Talking Photo Ad Solution Effective?

Before diving into specific platforms, it helps to understand what separates effective tools from gimmicky ones.

Realism matters most. That means natural facial motion, accurate lip-sync to audio, proper eye movement, and head gestures that match speech patterns. Poor execution here triggers the uncanny valley effect—viewers notice something’s off and disengage.

Voice quality is the second pillar. Tools that support voice cloning or integrate with high-quality text-to-speech engines produce better results than those relying on robotic narration. The Federal Trade Commission has issued guidance on AI-enabled voice cloning, urging transparency when synthetic voices are used in commercial contexts.

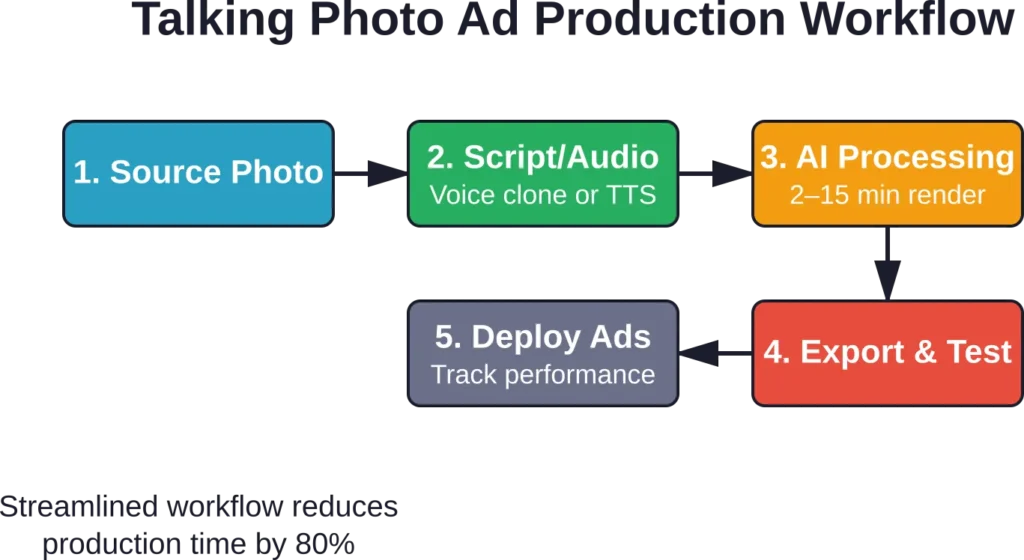

Production speed defines scalability. Platforms that batch-process videos, offer template libraries, and automate editing workflows cut production time significantly. Testing by multiple sources suggests standardized workflows can reduce production time by up to 80% compared to traditional shoots.

Integration depth affects ROI measurement. Solutions that connect with ad platforms, CRMs, or analytics dashboards allow marketers to track performance—views, clicks, conversions—without manual exports. Tools operating in isolation require additional steps and separate tracking systems.

Cost structure determines viability at scale. Free tiers work for testing; mid-range subscriptions ($30–$100/month) suit small teams; enterprise pricing (custom quotes) applies when volume exceeds hundreds of videos monthly or when API access is required.

Top AI Talking Photo Ads Platforms in 2026

The following platforms represent the current landscape, each addressing different segments and priorities.

HeyGen

HeyGen positions itself as an enterprise-grade solution for creating talking avatar videos from photos. The platform supports multilingual content, custom avatar creation from uploaded photos, and script-to-video workflows that handle everything from voice generation to subtitle placement.

Facial animation quality is high—motion feels fluid, and lip-sync accuracy stands out during testing. The tool works well for product demos, explainer videos, and brand spokesperson content.

HeyGen integrates with marketing automation platforms and offers API access for teams building custom workflows. Enterprise pricing runs through a contact-sales model, which typically signals costs above $200/month for meaningful usage; check the vendor's pricing page for current self-serve plan rates.

Best for: marketing teams producing multilingual campaigns at scale, agencies managing multiple clients, and brands needing consistent spokesperson videos without hiring talent.

Dupdub

Dupdub combines talking photo generation with broader AI media tools—voiceovers, subtitle generation, and video editing. The talking photo feature animates portraits with customizable expressions and supports both uploaded audio and text-to-speech input.

The interface emphasizes speed. Template selection, photo upload, script entry, and export happen in a linear workflow designed for users without video editing experience. Output quality is adequate for social media ads and internal communications, though realism doesn’t match higher-end platforms.

Dupdub offers a free tier with watermarked exports and limited minutes. Paid plans start affordably, making it accessible for solo creators and small businesses testing talking photo formats.

Best for: content creators producing social media ads quickly, small businesses needing occasional spokesperson videos, and teams prioritizing budget over photorealism.

Vidnoz

Vidnoz provides free talking photo creation with optional paid upgrades. The platform focuses on accessibility—no signup walls for basic features, fast rendering, and straightforward controls.

Animation quality sits in the mid-range. Motion feels slightly robotic compared to premium tools, but it’s sufficient for casual marketing, educational content, and personal projects. The tool supports multiple languages and includes a library of stock avatars for users who don’t want to upload their own photos.

Vidnoz monetizes through watermark removal, higher resolution exports, and commercial licensing. Free users can create unlimited videos but face branding overlays and 720p resolution caps.

Best for: educators creating lesson content, marketers testing talking photo formats before committing budget, and hobbyists experimenting with AI video tools.

Synthesys

Synthesys offers talking photo ads as part of a broader AI content suite. The platform emphasizes commercial licensing—all generated content is cleared for advertising, client work, and resale.

Facial animation quality is strong, with natural head movements and accurate lip-sync. Synthesys supports custom voice cloning, allowing brands to maintain consistent vocal identity across campaigns. The platform also includes stock photo libraries, eliminating the need to source or license portraits separately.

Pricing operates on a credit system. Users purchase monthly credits that deplete based on video length and features used. Mid-tier plans run around $50–$150/month depending on volume.

Best for: agencies producing client work, brands maintaining consistent spokesperson identity, and marketers needing clear commercial licensing for ad content.

LipSync.video

LipSync.video specializes in lip-sync accuracy. The platform takes photos and audio files (or text scripts) and generates videos where mouth movements precisely match speech patterns.

The tool prioritizes technical precision over additional features. There’s no built-in voiceover generator or editing suite—users bring their own audio and photos. That narrow focus results in superior lip-sync quality compared to all-in-one platforms.

LipSync.video works well for teams with existing audio assets or those using professional voiceover artists. The output integrates cleanly into broader video editing workflows.

Pricing is usage-based, charged per video minute. Cost predictability improves when volume is consistent month-to-month.

Best for: post-production teams adding lip-sync to existing content, brands with professional voiceover libraries, and developers building custom video applications via API.

Typecast.ai

Typecast combines talking avatar generation with an extensive voice library. The platform includes hundreds of voice options across languages, ages, and tones, allowing precise matching between avatar appearance and vocal characteristics.

Avatar creation supports photo uploads or selection from a stock library. Motion quality is good—natural enough for professional ads but not reaching the photorealism of premium platforms.

Typecast targets marketing teams producing high volumes of localized content. The voice library and multilingual support make it efficient for brands operating across regions.

Pricing starts around $30/month for basic plans, scaling up based on voice usage and export resolution.

Best for: international brands creating localized ads, e-learning companies producing training videos in multiple languages, and marketers needing diverse spokesperson personas.

Media.io

Media.io offers talking photo creation alongside video editing, format conversion, and media optimization tools. The talking photo feature is straightforward—upload a portrait, add text or audio, and export.

Animation quality is functional but not exceptional. The tool works for quick social media posts, email marketing videos, and internal communications where production value is secondary to speed.

Media.io includes a free tier with limited exports per month. Paid plans remove restrictions and add higher resolution options.

Best for: small businesses creating social media content quickly, marketers producing email campaign videos, and teams needing occasional talking photo outputs without ongoing subscriptions.

Pricing Structures Across Platforms

Cost models vary significantly, and understanding them prevents budget surprises.

Subscription tiers are most common. Basic plans ($10–$30/month) typically include limited video minutes, watermarked exports, and standard resolution. Mid-tier plans ($50–$150/month) remove watermarks, increase minute allowances, and add features like custom avatars or voice cloning. Enterprise plans (custom pricing) offer unlimited usage, API access, dedicated support, and white-label options.

Credit-based systems charge per video generated or per minute rendered. This works well for sporadic usage but can become expensive at high volumes. For reference, some platforms price credits around $9/month for basic packages with 150 credits, scaling to $39/month for 1,200 credits.
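To compare credit packages like the ones quoted above, it helps to reduce each to a unit cost. The sketch below uses the two price points from the text; the `CreditPack` class and the figure of 10 credits per video are hypothetical, since actual credit consumption depends on each platform's rules.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CreditPack:
    name: str
    monthly_price: float  # USD per month
    credits: int          # credits included per month

    @property
    def cost_per_credit(self) -> float:
        return self.monthly_price / self.credits


def cost_per_video(pack: CreditPack, credits_per_video: int) -> float:
    """Effective cost of one video, given how many credits it consumes."""
    return pack.cost_per_credit * credits_per_video


basic = CreditPack("basic", 9.0, 150)      # price point quoted in the text
larger = CreditPack("larger", 39.0, 1200)  # price point quoted in the text
CREDITS_PER_VIDEO = 10  # hypothetical; check the platform's credit rules

for pack in (basic, larger):
    print(
        f"{pack.name}: ${pack.cost_per_credit:.4f} per credit, "
        f"${cost_per_video(pack, CREDITS_PER_VIDEO):.2f} per video"
    )
```

The larger package works out to a little over half the per-credit cost of the basic one, but only if the credits are actually used before they expire.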

Usage-based API pricing suits developers and large operations. Costs are calculated per API call, video second, or processing unit. This model offers precision but requires careful monitoring to avoid runaway costs.

Free tiers exist but come with trade-offs—watermarks, resolution limits, queue delays, and restricted commercial licensing. Free tools work for testing and personal projects but rarely suit serious advertising campaigns.

| Pricing Model | Best For | Typical Range | Watch Out For |
|---|---|---|---|
| Monthly Subscription | Consistent production volume | $30–$150/month | Minute caps, feature restrictions per tier |
| Credit Packs | Sporadic usage, seasonal campaigns | $10–$100 per pack | Credit expiration dates, unclear cost-per-video |
| API/Usage-Based | High volume, custom integrations | $0.05–$0.50 per minute | Unpredictable costs without monitoring |
| Free Tier | Testing, personal projects | $0 | Watermarks, low resolution, licensing limits |
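The choice between a flat subscription and per-minute billing comes down to a break-even volume, and usage-based plans benefit from a simple spend alert. The sketch below is illustrative only: the $50 subscription and $0.50 per-minute rate are picked from the ranges in the table, all function names are hypothetical, and subscription minute caps are ignored for simplicity.

```python
def usage_cost(minutes: float, rate_per_minute: float) -> float:
    """Total cost under per-minute billing."""
    return minutes * rate_per_minute


def break_even_minutes(subscription_price: float, rate_per_minute: float) -> float:
    """Monthly minutes at which per-minute billing equals the flat fee."""
    return subscription_price / rate_per_minute


def cheapest_model(minutes: float, subscription_price: float, rate_per_minute: float) -> str:
    """Pick the cheaper model for a given monthly volume (ties go to subscription)."""
    if usage_cost(minutes, rate_per_minute) < subscription_price:
        return "usage"
    return "subscription"


def over_budget(minutes_so_far: float, rate_per_minute: float,
                monthly_budget: float, alert_fraction: float = 0.8) -> bool:
    """True once usage spend reaches the alert fraction of the monthly budget."""
    return usage_cost(minutes_so_far, rate_per_minute) >= alert_fraction * monthly_budget


SUBSCRIPTION = 50.0  # assumed mid-tier plan, USD per month
RATE = 0.50          # assumed API rate, USD per rendered minute

print("Break-even minutes:", break_even_minutes(SUBSCRIPTION, RATE))
for minutes in (40, 100, 200):
    print(minutes, "min ->", cheapest_model(minutes, SUBSCRIPTION, RATE))
print("Alert at 90 min:", over_budget(90, RATE, monthly_budget=50.0))
```

Running the numbers with a team's own volume and quoted rates is a quick way to see which row of the table actually applies.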

Realism vs. Speed Trade-offs

Higher realism requires more processing time and compute resources. Premium platforms using advanced neural rendering might take 5–15 minutes per video. Budget tools using simpler motion synthesis export in under 2 minutes but produce less convincing results.

That said, realism requirements depend on context. A luxury brand launching a high-budget campaign needs photorealistic output. A local business posting daily social media updates can accept slightly robotic motion in exchange for faster turnaround.

Real talk: diminishing returns kick in quickly. The jump from “clearly AI” to “pretty good” is huge. The jump from “pretty good” to “indistinguishable from real video” costs exponentially more in time, money, and processing power—and most audiences don’t notice the difference in a 15-second ad.

Performance Analytics Integration

Some platforms now bundle performance tracking directly into their workflows. These tools measure views, engagement rates, click-through rates, and conversions tied to specific talking photo ads.

Integration depth varies. Basic analytics might show view counts and play duration. Advanced systems connect with Facebook Ads Manager, Google Ads, or CRM platforms, attributing leads and sales back to individual videos.

This matters because measuring ROI on talking photo ads requires connecting creative output to business outcomes. Platforms operating in isolation force marketers to manually track performance across multiple dashboards, increasing error rates and slowing optimization cycles.
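When a video tool has no built-in analytics, one common workaround is to tag each ad's landing URL so the analytics dashboard can attribute traffic to a specific creative. The sketch below assumes the analytics tool reads standard UTM query parameters; the function name, campaign name, and creative ID are hypothetical.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def tag_landing_url(base_url: str, campaign: str, creative_id: str,
                    source: str = "facebook", medium: str = "paid_social") -> str:
    """Append UTM parameters to a landing URL, keeping any existing query string."""
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_source": source,
        "utm_medium": medium,
        "utm_campaign": campaign,
        "utm_content": creative_id,  # identifies the individual video variant
    })
    return urlunsplit(parts._replace(query=urlencode(query)))


# One tagged URL per creative variant in the same campaign
for creative in ("talking_photo_v1", "talking_photo_v2", "static_image_control"):
    print(tag_landing_url("https://example.com/offer?ref=home", "spring_launch", creative))
```

Using a distinct `utm_content` value per variant keeps talking photo ads and static controls separable in reports without manual exports.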

For teams running tests—comparing talking photo ads against static images or traditional video—built-in analytics streamline the process. A/B testing becomes easier when the video creation tool and the analytics dashboard share data automatically.

Regulatory Considerations for AI-Generated Ads

Transparency around synthetic media is becoming a regulatory focus. The Federal Trade Commission announced a crackdown on deceptive AI claims in September 2024, targeting operations that misuse AI technology or make misleading claims about AI capabilities.

In March 2026, the FTC announced that Air AI and its owners will be banned from marketing business opportunities to settle FTC charges that the company misled many entrepreneurs and small businesses.

For marketers using talking photo ads, the takeaway is clear: disclose when content is AI-generated if viewers might reasonably assume it’s a real person. Platforms that offer disclaimers or labeling tools help maintain compliance.

Voice cloning raises additional concerns. The FTC’s Office of Technology published guidance on AI-enabled voice cloning on April 8, 2024, emphasizing the need for consent when using someone’s likeness or voice in commercial contexts.

Best practice: include subtle disclosures like “AI-generated spokesperson” in video descriptions or ad copy, especially when the avatar resembles a specific individual or uses a cloned voice.

Use Cases Beyond Traditional Advertising

Talking photo ads extend beyond paid social media campaigns. The same technology applies to multiple contexts.

E-learning platforms use talking avatars to create instructor personas without filming live lectures. This allows course creators to update content by re-rendering videos rather than scheduling new shoots.

Internal communications benefit from personalized spokesperson videos. HR departments create onboarding videos, executives deliver company updates, and training teams produce safety briefings—all using talking photo technology to maintain consistency without repeated filming.

Real estate listings gain engagement when property photos are paired with talking agent introductions. The same technique works for automotive sales, B2B product catalogs, and any vertical where visual products benefit from spoken explanations.

Customer service chatbots integrate talking avatars to humanize interactions. Instead of text-only responses, bots deliver answers via short video clips featuring a consistent brand representative.

Common Pitfalls and How to Avoid Them

Over-reliance on AI motion without editing produces repetitive content. The best results come from combining AI generation with light editing—trimming pauses, adding B-roll, adjusting pacing.

Poor audio quality undermines even the best facial animation. Invest in decent microphones or use high-quality text-to-speech engines. Viewers tolerate slightly robotic motion more easily than garbled audio.

Mismatched avatar-voice pairings break immersion. A young avatar with an elderly voice or a female face with a male voice creates cognitive dissonance. Match demographics carefully.

Ignoring platform-specific requirements wastes time. Social media platforms have distinct aspect ratio, length, and captioning standards. Generate videos in the correct format from the start rather than cropping and resizing afterward.

Skipping A/B testing leaves performance on the table. Run talking photo ads alongside traditional formats to measure lift. Not every audience responds the same way—data beats assumptions.
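To judge whether a measured lift is more than noise, a two-proportion z-test on click-through rates is one standard option. The sketch below is a minimal version using only the standard library; the click and view counts are made-up illustration data, and the function names are hypothetical.

```python
import math


def relative_lift(clicks_a: int, views_a: int, clicks_b: int, views_b: int) -> float:
    """Relative change in click-through rate of variant B over variant A."""
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    return (rate_b - rate_a) / rate_a


def two_proportion_z_test(clicks_a: int, views_a: int,
                          clicks_b: int, views_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in click-through rates."""
    if views_a <= 0 or views_b <= 0:
        raise ValueError("each variant needs at least one view")
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if std_err == 0:
        raise ValueError("pooled rate is 0 or 1, so the test is undefined")
    z = (clicks_b / views_b - clicks_a / views_a) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail
    return z, p_value


# Illustration data only: A = static image ad, B = talking photo ad
z, p = two_proportion_z_test(clicks_a=200, views_a=10_000, clicks_b=260, views_b=10_000)
lift = relative_lift(200, 10_000, 260, 10_000)
print(f"lift={lift:.1%}, z={z:.2f}, p={p:.4f}")
```

A small p-value suggests the difference is unlikely to be chance alone, but the test assumes comparable audiences and enough impressions per variant.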

Selecting the Right Platform for Your Needs

Choosing a talking photo ads solution starts with defining priorities.

For teams prioritizing realism—luxury brands, corporate communications, high-value campaigns—invest in premium platforms with advanced neural rendering. The cost per video is higher, but output quality justifies it when brand perception matters.

For high-volume producers—agencies, e-learning companies, social media teams—prioritize speed and batch processing. Mid-tier platforms offering template libraries and fast render times maximize throughput.

For budget-conscious creators—small businesses, solo marketers, startups—free or low-cost tools provide acceptable quality for social media and email campaigns. Accept trade-offs in realism to keep costs down.

For technical teams building custom applications—SaaS companies, app developers, enterprise operations—API access is non-negotiable. Choose platforms offering developer-friendly documentation and predictable usage-based pricing.

For international campaigns, multilingual support and diverse voice libraries are critical. Platforms with extensive language coverage streamline localization.

| Priority | Recommended Platform Type | Key Features to Check |
|---|---|---|
| Maximum Realism | Premium/Enterprise | Neural rendering, custom avatars, voice cloning |
| High Volume | Mid-tier with templates | Batch processing, fast render, automation |
| Budget Constraints | Free/Low-cost | Flexible licensing, no hidden fees |
| Custom Integration | API-first platforms | Developer docs, usage-based pricing |
| Multilingual Campaigns | Global platforms | Voice library size, language coverage |

Future Trends in Talking Photo Technology

Realism will continue improving as models train on larger datasets. Expect better microexpressions, more natural idle motion, and fewer uncanny valley moments.

Real-time generation is emerging. Current platforms render offline, but real-time talking avatars are already appearing in live chat applications and virtual events. Latency is dropping as processing efficiency improves.

Emotion control will become standard. Next-generation tools will let creators specify emotional tone—excitement, empathy, urgency—and have the avatar’s facial expressions and vocal inflection adjust automatically.

Interactive talking photos are on the horizon. Instead of pre-rendered videos, viewers will interact with avatars that respond to questions or choices, blending video ads with conversational AI.

Regulatory frameworks will tighten. Expect clearer requirements around disclosure, consent, and licensing as governments catch up with synthetic media capabilities.

Frequently Asked Questions

Do talking photo ads perform better than static image ads?

Reported testing suggests talking photo ads typically outperform static images in engagement metrics: longer view times, higher click-through rates, and better recall. But effectiveness depends on execution quality and audience context. Poor animation can reduce credibility, while well-produced talking ads increase perceived trustworthiness and message retention.

Do I need permission to use someone's photo or voice in a talking photo ad?

Yes, absolutely. Using someone's likeness (photo or voice) for commercial purposes without consent can violate publicity rights and trigger legal action. Always obtain written permission or use stock photos with explicit commercial licensing. The FTC has emphasized transparency and consent requirements for AI-generated content featuring identifiable individuals.

Do talking photo ads work for B2B marketing?

Yes. B2B audiences respond well to talking photo ads in product demos, explainer videos, and personalized outreach. The format humanizes communication and conveys complex information more effectively than text alone. Test talking ads in email campaigns, LinkedIn ads, and website landing pages to measure lift against traditional formats.

How long should a talking photo ad be?

Social media ads perform best at 15–30 seconds. Longer videos (60–90 seconds) work for landing pages and email campaigns where viewers are already engaged. Attention spans are short, so deliver the core message in the first 5 seconds and provide clear next steps before the video ends.

What resolution and aspect ratio should I export?

1080×1080 (square) works for Instagram and Facebook feed posts. 1080×1920 (vertical) suits Stories and Reels. 1920×1080 (horizontal) is standard for YouTube and LinkedIn. Export in the platform's native aspect ratio to avoid cropping and maintain quality. Most platforms compress uploaded videos, so start with the highest resolution the tool offers.
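For teams scripting their exports, the dimensions above can be kept in a small lookup so each render job requests the right size up front. This is a sketch under assumptions: the placement keys and function names are hypothetical, and the pixel sizes are simply the ones listed in the answer above.

```python
from math import gcd

# Pixel dimensions (width, height) per placement, as listed above
EXPORT_SIZES = {
    "instagram_feed": (1080, 1080),
    "facebook_feed": (1080, 1080),
    "stories": (1080, 1920),
    "reels": (1080, 1920),
    "youtube": (1920, 1080),
    "linkedin": (1920, 1080),
}


def aspect_ratio(width: int, height: int) -> str:
    """Reduce pixel dimensions to a simple W:H ratio string."""
    divisor = gcd(width, height)
    return f"{width // divisor}:{height // divisor}"


def export_size(placement: str) -> tuple[int, int]:
    """Look up the export dimensions for a placement key."""
    try:
        return EXPORT_SIZES[placement]
    except KeyError:
        known = ", ".join(sorted(EXPORT_SIZES))
        raise ValueError(f"unknown placement {placement!r}; expected one of: {known}") from None


for placement in ("instagram_feed", "reels", "youtube"):
    width, height = export_size(placement)
    print(f"{placement}: {width}x{height} ({aspect_ratio(width, height)})")
```

Platform specifications change over time, so the table is best treated as a starting point and checked against each network's current ad guidelines.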

Can I create the same ad in multiple languages?

Yes, and this is one of the format's biggest advantages. Generate one avatar photo, then create versions with different voice tracks for each language. This maintains visual consistency across markets while delivering localized messaging. Platforms with extensive voice libraries and text-to-speech in multiple languages streamline this process.

Are free talking photo tools good enough for advertising?

Free tools work for testing concepts and low-stakes content like internal communications or personal projects. For client-facing ads, paid platforms offer better realism, licensing clarity, and features like watermark removal and higher resolution. The cost difference between free and mid-tier plans ($30–$50/month) is typically justified by output quality improvements.

Conclusion

Talking photo ads represent a practical middle ground between static images and full video production. The technology has matured to the point where output quality is acceptable for most commercial applications, and costs have dropped enough to make the format accessible beyond enterprise budgets.

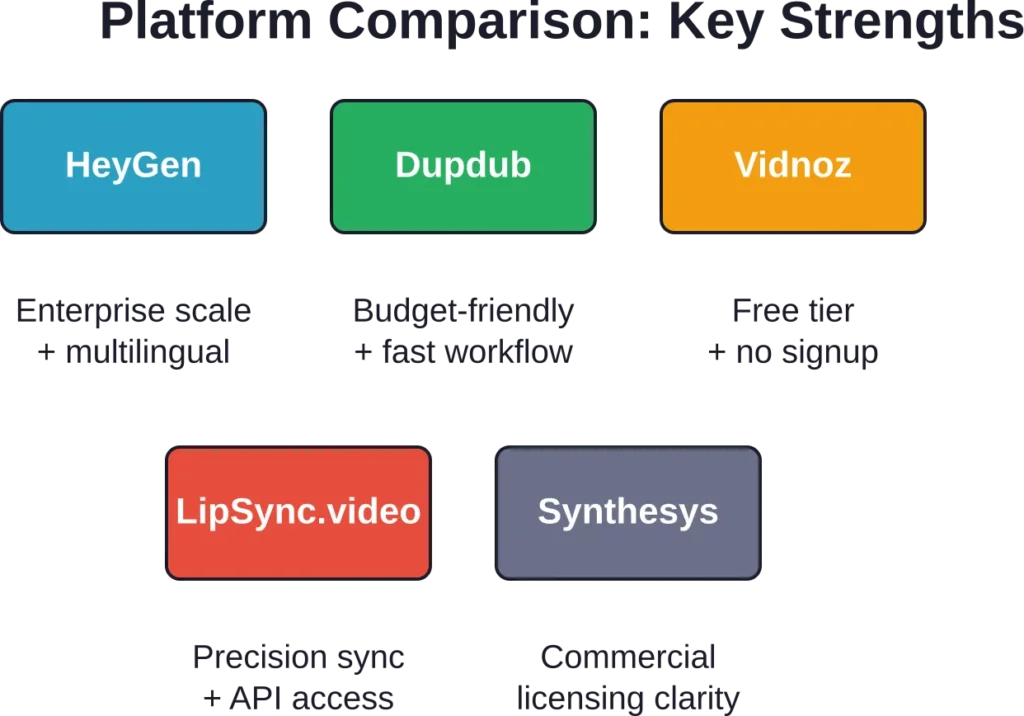

Platform selection hinges on priorities—realism, speed, cost, integration depth, or licensing clarity. No single tool dominates every category. HeyGen and Synthesys lead on quality; Dupdub and Vidnoz win on accessibility; LipSync.video excels at technical precision.

The AI video market’s projected growth to $27.77 billion by 2030 reflects sustained demand for scalable, cost-effective video content. Talking photo ads are part of that trend, offering a production workflow that cuts traditional timelines by up to 80% while maintaining enough realism to drive engagement and conversions.

For marketers and creators evaluating these tools, the best approach is hands-on testing. Most platforms offer free trials or low-cost starter plans. Run small experiments, measure performance against current ad formats, and scale what works.

Ready to test talking photo ads in your campaigns? Start with a mid-tier platform matching your volume needs, run A/B tests against existing creative, and track performance metrics closely. The data will reveal whether the format suits your audience—and which platform delivers the best ROI for your specific use case.